Context

This repo is a competitive AI workspace for a CodinGame Snakebird challenge. Rather than writing a single bot, I built an environment for repeated experimentation: referee logic, local simulation, visual debugging, benchmark harnesses, and multiple bot families.

What I built

The project combines game infrastructure and strategy iteration.

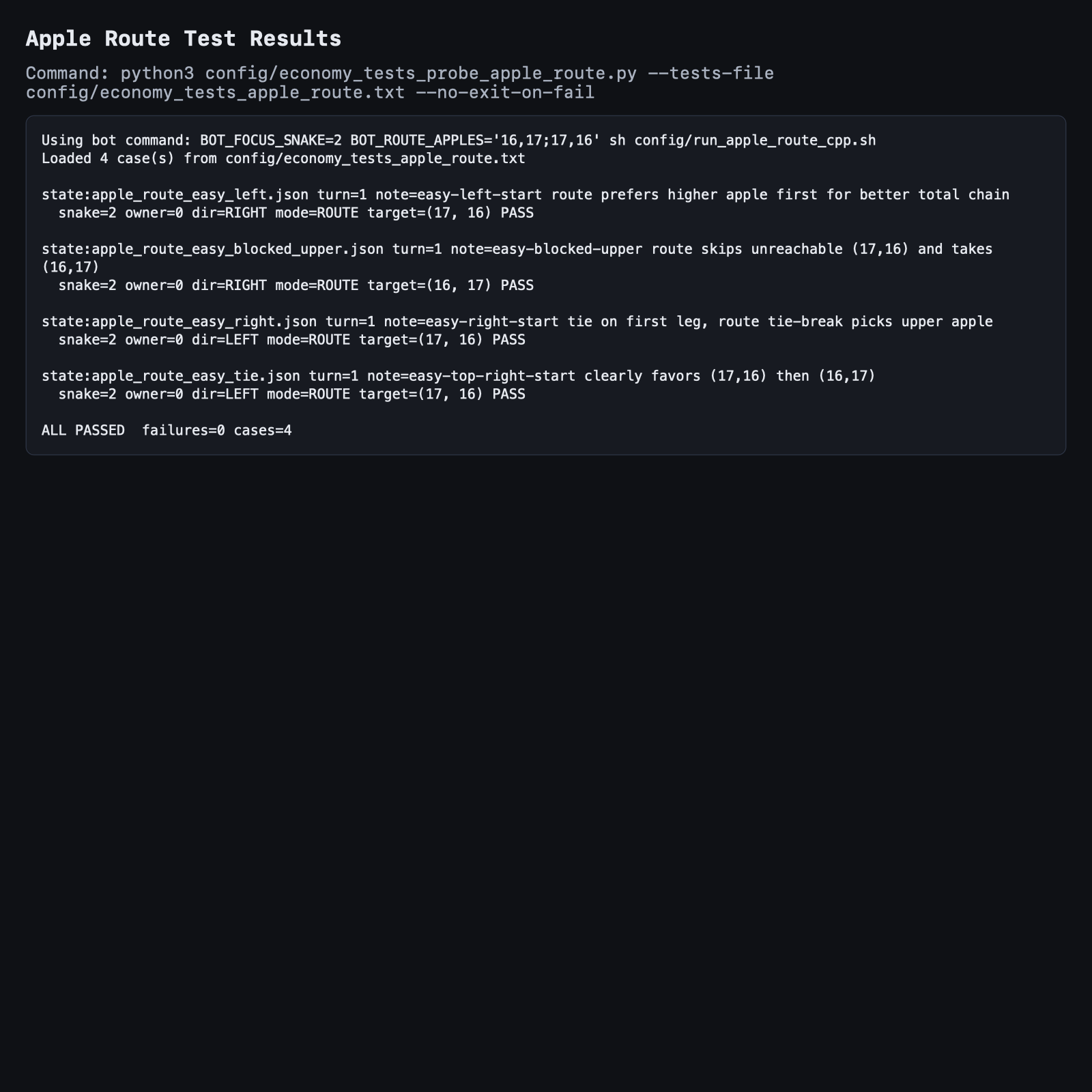

A report view from the experimentation workflow used to compare pathing behavior and strategy changes visually.

- A local referee and simulation environment for the game rules

- A visual client and replay tooling to inspect behavior turn by turn

- Multiple bot families with different planning strategies

- Repeatable benchmark and regression reports exported as HTML artifacts

Technical focus

The strongest bot work centered on path planning and safety evaluation.

- A*-style routing under tight runtime constraints

- Danger scoring for chokepoints, boundaries, and enemy races to contested apples

- Ally-aware planning ideas for coordination instead of purely selfish routing

- Profiling and isolated component tests to understand which heuristics were helping
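To make the first two points concrete, here is a minimal sketch of how A*-style routing and danger scoring can be combined. It is not the code from this repo: the plain 4-connected grid, the `danger` function (which only counts walls and boundaries next to a cell), the `danger_weight` parameter, and the `max_expansions` budget standing in for the runtime limit are all assumptions made for illustration. The real game rules add constraints this sketch ignores.

```python
import heapq
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]
STEPS = ((1, 0), (-1, 0), (0, 1), (0, -1))


def in_bounds(cell: Cell, width: int, height: int) -> bool:
    return 0 <= cell[0] < width and 0 <= cell[1] < height


def danger(cell: Cell, width: int, height: int, blocked: Set[Cell]) -> int:
    """Hypothetical danger score: number of neighbours that are walls or off-grid."""
    x, y = cell
    score = 0
    for dx, dy in STEPS:
        nxt = (x + dx, y + dy)
        if not in_bounds(nxt, width, height) or nxt in blocked:
            score += 1
    return score


def a_star(
    start: Cell,
    goal: Cell,
    width: int,
    height: int,
    blocked: Set[Cell],
    danger_weight: int = 1,
    max_expansions: int = 10_000,
) -> Optional[List[Cell]]:
    """Return a path from start to goal, or None if none is found within the budget."""

    def heuristic(cell: Cell) -> int:
        # Manhattan distance never overestimates, because every step costs at least 1.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (f = g + h, g, cell)
    best_cost: Dict[Cell, int] = {start: 0}
    parent: Dict[Cell, Cell] = {}
    expansions = 0

    while open_heap and expansions < max_expansions:
        _, cost, cell = heapq.heappop(open_heap)
        if cost > best_cost.get(cell, cost):
            continue  # stale heap entry; a cheaper route to this cell was found later
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return path[::-1]
        expansions += 1
        for dx, dy in STEPS:
            nxt = (cell[0] + dx, cell[1] + dy)
            if not in_bounds(nxt, width, height) or nxt in blocked:
                continue
            # Each step costs 1 plus a penalty for entering a risky cell.
            new_cost = cost + 1 + danger_weight * danger(nxt, width, height, blocked)
            if nxt not in best_cost or new_cost < best_cost[nxt]:
                best_cost[nxt] = new_cost
                parent[nxt] = cell
                heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None
```

Folding the danger penalty into the step cost keeps the search a single pass, and the expansion cap gives a predictable worst case when the turn budget is tight. Setting `danger_weight=0` recovers plain shortest-path routing, which is a convenient baseline when testing whether the heuristic actually helps.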

Why this project is useful

I enjoy projects like this because they force a balance between algorithm quality and engineering workflow. Building a stronger bot was only part of the problem. I also needed a good debugging loop and meaningful benchmarks so I could improve decisions systematically.

Another example of the reporting layer that made strategy iteration more disciplined than simple ladder play.

Engineering decisions

The project became much more useful once the benchmarking and reporting workflow could evaluate every change the same way, run after run. In this kind of environment, good tooling is a major part of building a better bot.
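As a rough illustration of what a repeatable evaluation can look like, the sketch below replays a fixed list of seeds and tallies the outcomes, so two bot versions are always compared on the same games. Everything here is hypothetical rather than taken from the repo: `Tally`, `run_benchmark`, the `play_match(seed)` callback (assumed to return a positive number for a candidate win, negative for a loss, and zero for a draw), and the `fake_match` stand-in used only to exercise the harness.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Tally:
    wins: int = 0
    losses: int = 0
    draws: int = 0

    @property
    def games(self) -> int:
        return self.wins + self.losses + self.draws

    @property
    def score(self) -> float:
        """Fraction of points won, counting a draw as half a win."""
        if self.games == 0:
            return 0.0
        return (self.wins + 0.5 * self.draws) / self.games


def run_benchmark(play_match: Callable[[int], int], seeds: Iterable[int]) -> Tally:
    """Play one match per seed and tally results from the candidate's point of view."""
    tally = Tally()
    for seed in seeds:
        outcome = play_match(seed)
        if outcome > 0:
            tally.wins += 1
        elif outcome < 0:
            tally.losses += 1
        else:
            tally.draws += 1
    return tally


def fake_match(seed: int) -> int:
    """Deterministic stand-in for a real referee call (assumption for this sketch)."""
    return seed % 3 - 1


if __name__ == "__main__":
    result = run_benchmark(fake_match, range(9))
    print(result, f"score={result.score:.2f}")
```

Because the seed list is fixed, a change in `score` between two runs can be attributed to the bot rather than to map luck, which is the property ladder play does not give you.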